[Spoiler alerts for Dawn of the Planet of the Apes. Go see it already.]

The post-apocalyptic sci-fi thriller Dawn of the Planet of the Apes is, for the most part, a meditation on ethics and morality. A good one, too. But that’s not what we’re here to talk about. Let others debate whether Caesar should have spared Koba. I’m here to analyze the human/ape conflict through the cold, rational lens of game theory.

[Disclaimer: Everything I know about game theory I learned from Wikipedia and hanging out with the writers of Overthinking It. Corrections and “well actually’s” are more than welcome in the comments.]

Prisoner’s Dilemma

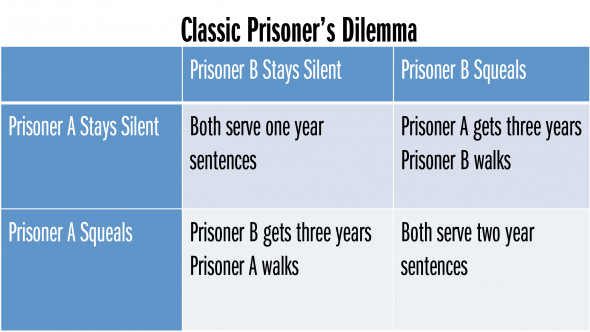

The human/ape conflict seems to lend itself quite well to the Prisoner’s Dilemma model, in which two opposing parties can choose whether or not to cooperate with each other. Here’s the classic scenario grid in case you need a refresher:

All of this boils down to what amounts to a cynical worldview: even though cooperation would yield the most positive outcome, each party nevertheless tends to act in their own self interest at the expense of the opposing party’s. When both parties choose to do so, neither achieves a positive outcome.
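For anyone who wants to poke at the grid directly, here is a minimal Python sketch. The payoff numbers are invented for illustration (higher is better) and do not come from the graphic; the names `PAYOFFS`, `best_response`, and `is_dominant` are likewise made up for this example. It checks the two properties that make the scenario a dilemma: defecting is each player's best reply no matter what the other side does, yet both players do better under mutual cooperation than under mutual defection.

```python
# Hypothetical payoffs (higher is better); the numbers are illustrative only.
# PAYOFFS[(row_move, col_move)] = (row_player_payoff, col_player_payoff)
COOPERATE, DEFECT = "cooperate", "defect"
MOVES = (COOPERATE, DEFECT)

PAYOFFS = {
    (COOPERATE, COOPERATE): (3, 3),
    (COOPERATE, DEFECT): (0, 5),
    (DEFECT, COOPERATE): (5, 0),
    (DEFECT, DEFECT): (1, 1),
}


def best_response(opponent_move):
    """Return the row player's payoff-maximizing move against a fixed opponent move."""
    return max(MOVES, key=lambda move: PAYOFFS[(move, opponent_move)][0])


def is_dominant(move):
    """True if `move` is the best response to every possible opponent move."""
    return all(best_response(other) == move for other in MOVES)


if __name__ == "__main__":
    for move in MOVES:
        print(f"{move} dominant? {is_dominant(move)}")
    print("both cooperate:", PAYOFFS[(COOPERATE, COOPERATE)])
    print("both defect:", PAYOFFS[(DEFECT, DEFECT)])
```

With these made-up numbers, defecting comes out as the dominant move even though mutual cooperation pays each side more than mutual defection, which is the tension the grid is meant to capture.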

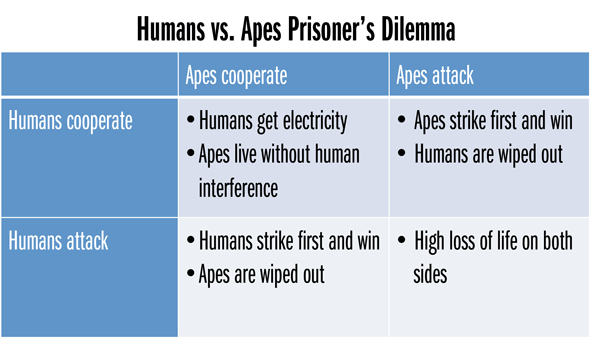

This model has been used to explain all sorts of conflicts between nations, so it’s no surprise that the human/ape conflict fits as well:

Sure enough, by the end of the movie, both parties find themselves in the least optimal bottom-right quadrant: lots of humans and apes die, with more fighting to come in the sequel.

This model works when you consider the conflict at a high level, but when you consider the events of the movie a little more carefully, the Prisoner’s Dilemma becomes less helpful for understanding the decisions that the human and ape leaders make. See, the key thing about the Prisoner’s Dilemma is that both sides make choices simultaneously and with no knowledge of what the other side is choosing (the ferry boat scene from The Dark Knight works well in this regard). That’s not the case in Dawn of the Planet of the Apes. Each side makes choices sequentially, in response to choices made by the other side or circumstances at hand.

Fortunately, game theory gives us another method to model this sort of scenario: decision trees.

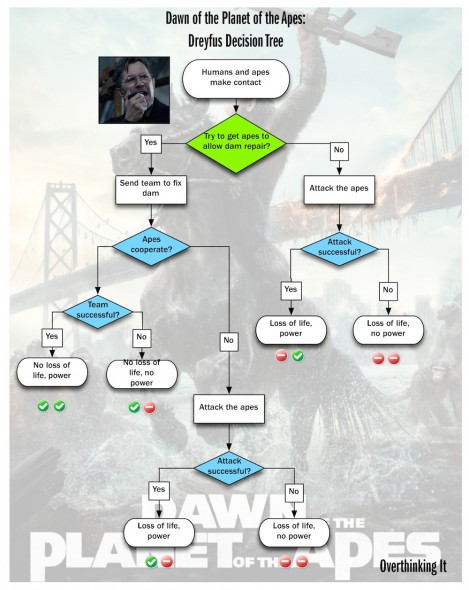

Decision Tree: Humans

Dreyfus, the leader of the human colony in San Francisco, needs electric power from the hydroelectric dam located in ape-controlled territory. In order to get it, he has a choice to make: he can either take control by force, or he can send a team to the dam and hope that the apes give them access.

What to do? Dreyfus needs to consider the costs and benefits of the possible scenarios and the likelihood of these scenarios occurring. In the decision tree graphic below, the green diamond represents a choice that Dreyfus actively makes. The blue diamonds represent circumstances out of Dreyfus’ control that influence the outcomes. Each outcome has a simple positive/negative indicator for two factors: loss of life and electric power.

Note that this graphic does not illustrate the probability of each scenario occurring. Dreyfus, with limited information on what the apes are capable of, probably doesn’t have the ability to accurately assess the probability anyway.

So what does he do? He makes the only decision that could possibly lead to the most optimal outcome in terms of loss of life and electric power: he sends the team to repair the dam, in hopes that the apes cooperate. Granted, he keeps a contingency plan for war in his back pocket which arguably provokes Koba, but let’s be honest: Koba was looking for an excuse to start a fight, and it’s not like the humans were going to dispose of the arsenal for fear of provoking the apes. For all intents and purposes, Dreyfus acts in good faith. And it would have worked, if it weren’t for one damned dirty ape.
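The rule Dreyfus follows here, picking the only choice whose branches can reach the ideal outcome, can be sketched in a few lines of Python. The branch names and the plus/minus marks below are assumptions made up for this example, not a transcription of the graphic, and `TREE`, `score`, `best_case`, and `choices_reaching_ideal` are hypothetical names. Since no probabilities are involved, this only asks what each choice could lead to at best.

```python
# Hypothetical reconstruction of Dreyfus' tree; branch names and marks are
# assumptions for illustration, not a copy of the article's graphic.
# Each outcome is (no_loss_of_life, electric_power): True = good, False = bad.
TREE = {
    "take dam by force": {
        "attack succeeds": (False, True),
        "attack fails": (False, False),
    },
    "send repair team": {
        "apes cooperate": (True, True),
        "apes refuse": (True, False),
        "apes attack": (False, False),
    },
}


def score(outcome):
    """Count how many of the two indicators are positive (0, 1, or 2)."""
    return sum(outcome)


def best_case(choice):
    """Best score reachable from `choice`, ignoring how likely each branch is."""
    return max(score(outcome) for outcome in TREE[choice].values())


def choices_reaching_ideal():
    """Choices with at least one branch where both indicators are positive."""
    return [choice for choice in TREE if best_case(choice) == 2]


if __name__ == "__main__":
    for choice in TREE:
        print(f"{choice}: best case scores {best_case(choice)} of 2")
    print("can reach the ideal outcome:", choices_reaching_ideal())
```

Under these assumptions, only sending the repair team has a branch with no loss of life and working power. A best-case rule like this says nothing about risk, which is where the missing probabilities would come in.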

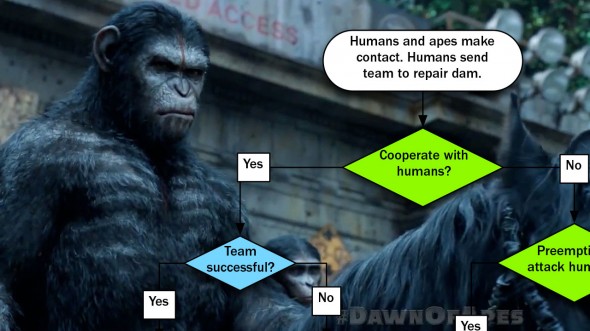

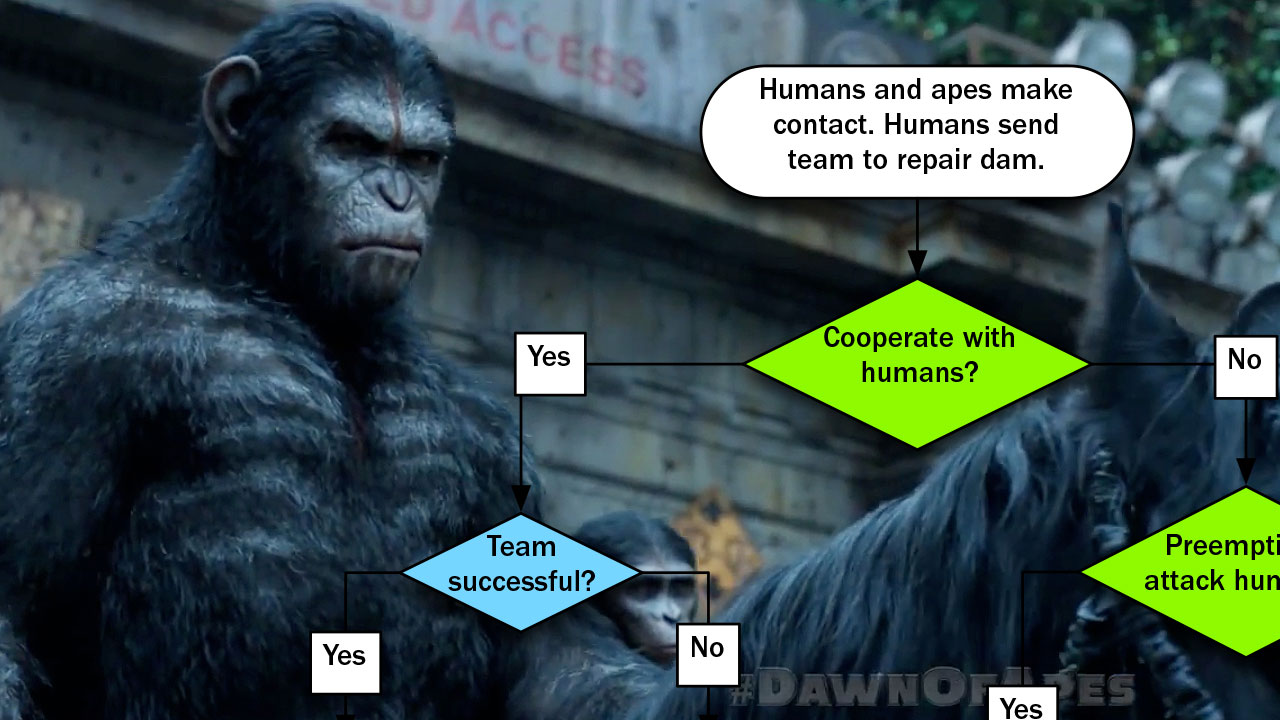

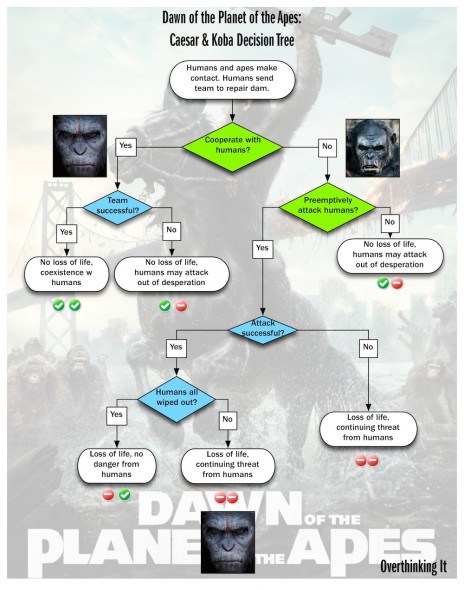

Decision Tree: Apes

Caesar, leader of the ape society, needs to choose how to deal with the humans who show up asking for access to the dam. Does he cooperate with them, or does he send them away?

Just like Dreyfus, Caesar also makes the only decision that could possibly lead to the most optimal outcome in terms of loss of life and peaceful coexistence with humans. Caesar unambiguously acts in good faith–he has no contingency plan–and it would have worked, if it weren’t for Koba’s coup d’ape-tat.

Koba, now in charge, reverses Caesar’s initial decision to cooperate with the humans. He decides to preemptively attack them, but he does so with a fatal flaw in his plan: he doesn’t take into account the possibility that more humans aside from those in the colony might be provoked by the ape attack and prolong the conflict. Had Koba considered all of the possibilities, he would have seen that a suboptimal outcome would likely result from his attack. Unfortunately, Koba was blinded by his hatred, and he made the wrong choice.
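To see how much a single ignored branch can matter, here is a rough expected-value sketch in Python. Every probability and payoff in it is invented for illustration, since the movie gives us no numbers, and `expected_value` plus the three branch lists are hypothetical names. It compares cooperation against the attack as Koba pictures it, and then against the attack once the "more humans arrive" branch is included.

```python
from fractions import Fraction


def expected_value(branches):
    """Probability-weighted average payoff of (probability, payoff) branches."""
    if sum(p for p, _ in branches) != 1:
        raise ValueError("branch probabilities must sum to 1")
    return sum(p * payoff for p, payoff in branches)


# All probabilities and payoffs below are invented for illustration.
COOPERATE = [
    (Fraction(7, 10), 10),   # peaceful coexistence
    (Fraction(3, 10), -5),   # humans turn hostile anyway
]
ATTACK_AS_KOBA_SEES_IT = [
    (Fraction(8, 10), 10),   # colony defeated, conflict over
    (Fraction(2, 10), -10),  # attack fails
]
ATTACK_ALL_BRANCHES = [
    (Fraction(2, 10), 10),   # colony defeated, conflict over
    (Fraction(2, 10), -10),  # attack fails
    (Fraction(6, 10), -20),  # more humans arrive and the war drags on
]

if __name__ == "__main__":
    scenarios = [
        ("cooperate", COOPERATE),
        ("attack (Koba's view)", ATTACK_AS_KOBA_SEES_IT),
        ("attack (all branches)", ATTACK_ALL_BRANCHES),
    ]
    for name, branches in scenarios:
        print(f"{name}: {float(expected_value(branches)):.1f}")
```

With these made-up numbers, the attack looks slightly better than cooperation when the third branch is left out and far worse once it is included. The specific values are arbitrary; what the sketch shows is that leaving out a branch can flip the ranking.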

And that’s what this movie is really about, right? Rational decision making. Acting based on careful consideration of costs and benefits, not hunches and grudges.

At the end of the movie, Caesar sends Koba to his death after passing judgment on him:

“KOBA NOT APE.”

That may or may not be true, but perhaps a more fitting epithet might have been:

“KOBA NOT EFFECTIVE MANAGER.”

I’m glad to see that the labels on the ‘Prisoner’s Dilemma’ four-blocker are still incorrect (they were screwed up on the Les Mis one as well). :)

While I’m a huge fan of PD style game theory in movies, even though it tends to be executed in a rather heavy-handed manner, I think it’s even more exciting in sports – see the recent World Cup match between the USA and Germany, where the ‘cooperate’ choice would have guaranteed that they both advance. While this example isn’t perfectly pure (it ignores seeding in future rounds), it was still wonderful to ponder. And while Germany still won by a score of 1-0, both teams kind of sat on their hands and didn’t push too hard.

D’oh! Thanks for pointing that out. It’s fixed now…right?

This was an enjoyable read. It’s so easy to complain about how mindless summer blockbusters have become that I really enjoy it when a film like DotPofA can be successful.

I also loved the disclaimer at the beginning and the phrase “well actuallys.” There was a Contrarian of the Highest Order in my film studies class who began every contribution to any discussion with that phrase. I see his face whenever I read a comment on a message board that begins with those two words.

We earnestly welcome the “well actually’s,” as long as they’re good-natured and not done to suppress discussion or demonstrate superiority for superiority’s sake (as may have been the case with your Contrarian classmate). It’s our way of “taking back” the phrase and making this a safe space for pedantry ;-).

Slightly off-topic, but I got a huge kick out of this news segment about chimpanzees attending a screening of the movie:

https://www.youtube.com/watch?v=ThfQTqNPiIA

Apparently some were concerned that the chimps would view it as an instructional film on world domination.

I think a major problem with the humans’ strategy is that they really offered no positive benefit for the apes to cooperate. As the chart indicates, the only thing on the table is “we’ll leave you alone”, which was precisely the situation the apes were in before the humans arrived. If the humans had actually negotiated something more substantial in exchange, such as food, tools, medicine, or other positive goods, not only would the apes have been generally more inclined to help, but Koba’s (or any other ape’s) later usurpation would have been less likely, as it would have meant forgoing tangible benefits.

Ultimately, the consequences arose because the humans didn’t actually treat the apes as worthy negotiating partners, but as impediments.

Ok, here’s a problem. When you translate from the simultaneous-move game to the decision trees, “coexistence with no loss of life” is a better outcome than “successful preemptive attack”.

If this is the case, you wouldn’t have a prisoner’s dilemma, since the apes would have no incentive to attack if the humans cooperate. (Attacking may still be optimal play depending on the humans, but it’s not a prisoner’s dilemma.)

If this is not the case, then choosing not to cooperate is the apes’ only possible option for reaching their most optimal outcome. (Cooperation could still be the optimal decision if you eliminate successful preemptive attack as a possibility.)

You made cooperation optimal in the second formulation (which isn’t really a game any more, but whatever) just by changing the apes’ preferences, not by a change of approach.

Also, if Koba hates the humans, then cooperation might be less desirable to him than a failed preemptive attack. In which case you have to consider that his incentives are different from those of ape culture in general.

Really sorry to be a huge stick in the mud.

Mark, you wrote: “All of this boils down to what amounts to a cynical worldview: even though cooperation would yield the most positive outcome, each party nevertheless tends to act in their own self interest at the expense of the opposing party’s.”

That’s not quite a correct understanding of the Prisoner’s Dilemma. The important result of the PD analysis is that it is individually rational for each player to defect. The reason is that no matter what your opponent does, you individually always do better by defecting.

In fact, in this particular problem human nature gives us an all-too-rare opportunity not to be cynical. In experiments involving (non-iterated) PD setups people frequently do cooperate, indicating that not-strictly-rational(*) concepts like fair play do inform people’s decisions, even in a straightforward situation like the PD.

(*) That’s the standard interpretation, anyhow. I think you can make a strong argument that no interaction, however carefully constructed, is truly isolated and that participating in a culture of “fairness” (including enforcing norms through sanctions whenever the opportunity arises) is actually pretty rational from an individual point of view. Together those observations imply that cooperating in experiments like PD or “The Ultimatum Game” is one move in a rational strategy for the larger string of interactions that comprises our daily lives.